Guidance to Improve Patching and Vulnerability Management

The core idea of patching timelines is very old: vulnerabilities should be addressed in priority order. This pattern was then picked up by the PCI standards, which became a common requirement.

However, the technical drivers behind the pattern have changed, and it is time for practices to change as well. This piece details how to succeed against today’s adversaries by making significant changes to how you patch, using automated deployment and testing.

Patching Automation Issues

Patching has gotten safer as vendors have split feature releases from security patches. This makes patching itself less risky and weakens the justification for pushback from internal teams. At the same time, automation technology has made patching easier. On the detection side, environments have grown significantly more complex, which can reduce the validity of a “critical” or “high” finding. Meanwhile, attacker capabilities have increased: attackers can automatically analyze patches to find areas to target and can rapidly chain lower-severity issues together into a more significant concern.

All told, this means the factor of concern is no longer patch severity but is, instead, exploitability.
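To make the shift from severity to exploitability concrete, here is a minimal sketch of a ranking function. The field names (`known_exploited`, `exploit_probability`, `internet_exposed`) and the sample findings are assumptions made for illustration only; they are not taken from any particular scanner or standard, and the ordering rule is one plausible choice rather than a recommended formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str             # placeholder identifier, not a real CVE
    severity: str               # scanner label such as "critical" or "medium"
    known_exploited: bool       # assumed flag: exploitation seen in the wild
    exploit_probability: float  # assumed 0.0-1.0 likelihood estimate
    internet_exposed: bool      # assumed flag from your own asset context


def exploitability_key(finding: Finding) -> tuple:
    # Known exploitation sorts first, then exposure, then likelihood.
    # The severity label is deliberately not part of the key.
    return (
        not finding.known_exploited,
        not finding.internet_exposed,
        -finding.exploit_probability,
    )


def prioritize(findings):
    """Return findings ordered from most to least exploitable."""
    return sorted(findings, key=exploitability_key)


findings = [
    Finding("finding-a", "critical", False, 0.02, False),
    Finding("finding-b", "medium", True, 0.90, True),
    Finding("finding-c", "high", False, 0.40, True),
]
ordered = [f.finding_id for f in prioritize(findings)]
print(ordered)
```

In this made-up data set, the "medium" finding that is actively exploited and internet-facing lands ahead of the "critical" finding on an unexposed system, which is the reordering the paragraph above argues for.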

Patching Exploitability and Psychological Pinning

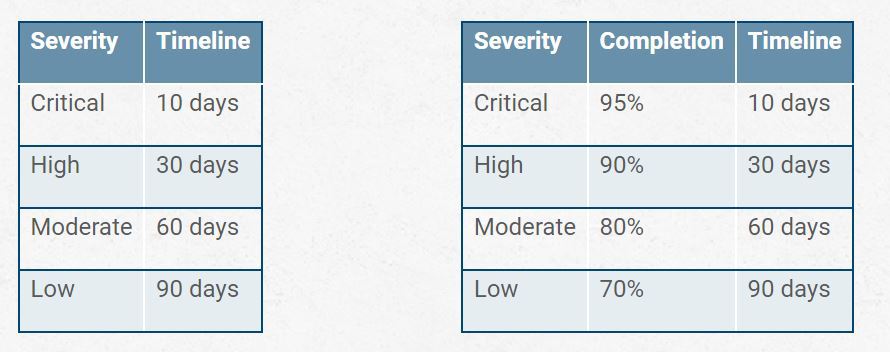

It’s common to see tables like these in vulnerability management programs. The one on the right is far less common, but it is important in organizations that are attempting to modernize while still constrained by contracts and standards built around a patch timeline concept (see Table 1).

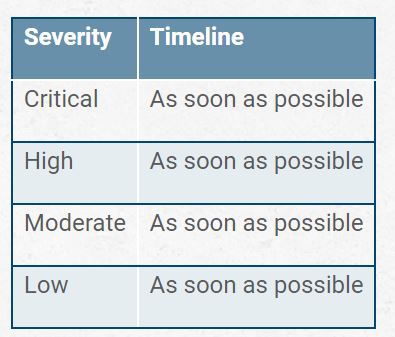

The problem is that even if you are setting an expectation that 95% of critical findings are to be patched within 10 days, leaving a buffer for more complex issues, the way such a rule is interpreted by most people is that patches do not need to be deployed until Day 9. In more mature organizations, they may take the form of patches being delayed to the next weekly patch window, still within the SLA. This is the psychological concept of “pinning,” where if someone hears that “high vulnerabilities should be patched in 30 days,” they are much more likely to deploy the patch on Day 28 than they are on Day 2. After all, on Day 2, there’s a lot of time before they have to do it. This means whatever the timeline says, all you’re doing is giving more time for attackers to target the issue. The correct table should look like this:

This table is obviously not very useful in a business context, but illustrative of the core conflicts we face.

Patching Core Conflict

The fundamental conflict of patching is that we (1) accept that humans aren’t error-proof and will make mistakes, yet (2) somehow believe they’ll fix those errors correctly in a patch.

The fundamental conflict of vulnerability scanning is that we (1) accept that companies focused on finding flaws in software are good at what they do, including collecting data from third parties to scan our environments, yet (2) somehow believe that scanners will find and report the issues to us so they can be fixed before attackers can take advantage of them.

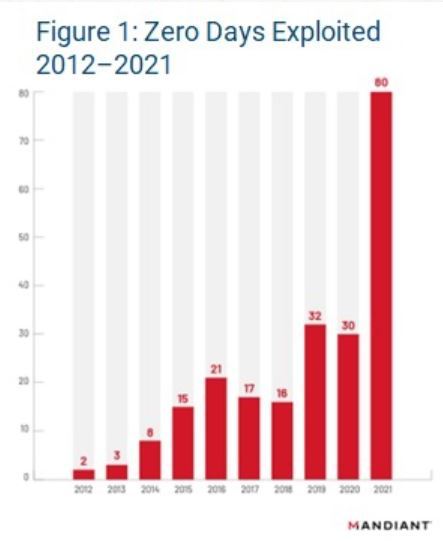

These conflicts are nonsensical, but they underpin the common business practice of patching within time windows. The entire practice is built like a house of cards, ready to topple with the first faulty patch or zero-day exploit, which are on a significant rise, as seen in Figure 1.

Worse, we have a fundamental conflict with exploitation as well, where we simultaneously believe that attackers are (1) supremely skilled and can release working exploit code quickly after a patch is released, and (2) utterly incompetent and won’t be able to compromise our systems within the patch windows, ignoring all evidence of zero-day attacks and vulnerability chaining.

Using a Risk-Based Approach to Patching

We see a lot of claims around organizations taking a “risk-based approach” to vulnerability management, coupled with a timeline table. Clearly, the risk they’re addressing is “risk of not meeting the SLA” as opposed to “risk of compromise.” Taking a true risk-based approach to vulnerability management requires a focus on automation, contextualization and compartmentalization. Putting any timeframe at all on a manual patching process, whether it involves scheduling, vetting or both, just serves to delay the one critical action: making a potentially vulnerable system impossible to compromise with a known attack.

Pushing the timelines without investing in the ability to automate patching (deployment along with automated testing, rollback and configuration of compensating controls) only serves to burn out the team. People leave, which makes it even harder to meet the arbitrary timelines. It also makes it harder to adopt the automation and infrastructure redesigns required for the contextualization and compartmentalization practices that a nimble automation model depends on.
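As a rough illustration of what "deployment along with automated testing, rollback and configuration of compensating controls" can look like as a single automated flow, consider the sketch below. Everything in it is hypothetical: the `PatchSteps` container, the step names and the returned status strings are invented for this example, and the real steps would call into whatever deployment and testing tooling you already use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PatchSteps:
    # Each step is injected so the flow itself stays tool-agnostic.
    apply_patch: Callable[[], None]
    run_tests: Callable[[], bool]
    rollback: Callable[[], None]
    apply_compensating_control: Callable[[], None]


def patch_with_rollback(steps: PatchSteps) -> str:
    """Apply a patch, verify it, and fall back automatically on failure."""
    try:
        steps.apply_patch()
        healthy = steps.run_tests()
    except Exception:
        # A failed deployment or crashed test run counts as unhealthy.
        healthy = False
    if healthy:
        return "patched"
    # Restore the previous state, then reduce exposure another way.
    steps.rollback()
    steps.apply_compensating_control()
    return "rolled_back_with_control"


# Simulated run in which the post-patch tests fail.
log = []
failing = PatchSteps(
    apply_patch=lambda: log.append("apply"),
    run_tests=lambda: False,
    rollback=lambda: log.append("rollback"),
    apply_compensating_control=lambda: log.append("control"),
)
result = patch_with_rollback(failing)
print(result, log)
```

The point of the design is that no human decision sits between a failed test and the fallback, so a bad patch costs minutes rather than a scheduling cycle.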

Vulnerability and Patching Best Practices

For this to work, you need to fundamentally rethink how you handle vulnerability management and move beyond basic patching:

- Truly segment your environment: Segmentation makes it harder to chain vulnerabilities; microsegmentation and zero trust make it much harder.

- Invest in testing: You need the ability to quickly verify that an altered system can perform its functions. This means a significant investment in testing multiple scenarios. This cannot be done manually if you want to scale.

- Consider blue/green environments: In a blue/green environment, you can flip between systems/configurations quickly to deploy patches in real time without downtime and with verification. This design is critical for rapid vulnerability management.

- Pre-analyze contexts: When a high-profile patch comes out, it is too late to start determining which systems are at risk. The systems should already be contextualized, so you can understand the impact on your environment within minutes and schedule the automated patch test process before attackers act.

The attackers are significantly more invested in automation and target identification than most organizations. It doesn’t matter what numbers you put into a table. Until you invest similarly, you’ll lag behind and will continue to fall behind faster every day.
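The "pre-analyze contexts" practice above can be sketched as an index that is built ahead of time, so that answering "which systems run the affected software?" is a lookup rather than an investigation. The inventory layout, host names, and product names below are all made up for illustration; a real inventory would come from your asset management or configuration tooling.

```python
from collections import defaultdict


def build_index(inventory):
    """Map (product, version) to the set of hosts running it, ahead of time."""
    index = defaultdict(set)
    for host, packages in inventory.items():
        for product, version in packages:
            index[(product, version)].add(host)
    return index


def affected_hosts(index, product, vulnerable_versions):
    """Return the sorted hosts running any vulnerable version of a product."""
    hosts = set()
    for version in vulnerable_versions:
        hosts |= index.get((product, version), set())
    return sorted(hosts)


# Hypothetical inventory: host name -> list of (product, version) pairs.
inventory = {
    "web-01": [("examplelib", "1.2.0"), ("exampled", "4.1")],
    "web-02": [("examplelib", "1.3.0")],
    "db-01": [("examplelib", "1.2.0")],
}
index = build_index(inventory)

# When an advisory lands, the lookup is immediate.
hits = affected_hosts(index, "examplelib", ["1.2.0", "1.2.1"])
print(hits)
```

The resulting host list is what would feed the automated patch test process, without anyone having to work out scope after the advisory is already public.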

Although reasonable efforts will be made to ensure the completeness and accuracy of the information contained in our blog posts, no liability can be accepted by IANS or our Faculty members for the results of any actions taken by individuals or firms in connection with such information, opinions, or advice.